Summary

- Why should you monitor the logins of your company, right now?

- How can BuffaLogs protect you?

- How to install and configure BuffaLogs

- Full featured log-in analysis in PanOptikon®

- Would you like to collaborate with us?

1. Why should you monitor the logins of your company, right now?

In the last few years, the security of credential-based authentication systems has improved considerably, thanks to measures such as password strength meters and multi-factor authentication. Despite this, credential theft, the first stage of a credential-based attack, has been increasing too. To obtain their victims' credentials, attackers can run non-targeted phishing, as in mass credential harvesting campaigns, or spear-phishing, where they carefully research their targets so as to pose as trusted senders in a plausible context. Since credential theft relies mainly on human error, these intrusions cannot always be anticipated by threat hunting systems. For these reasons, the purpose of this project is to provide an Open Source solution for the detection of anomalous logins.

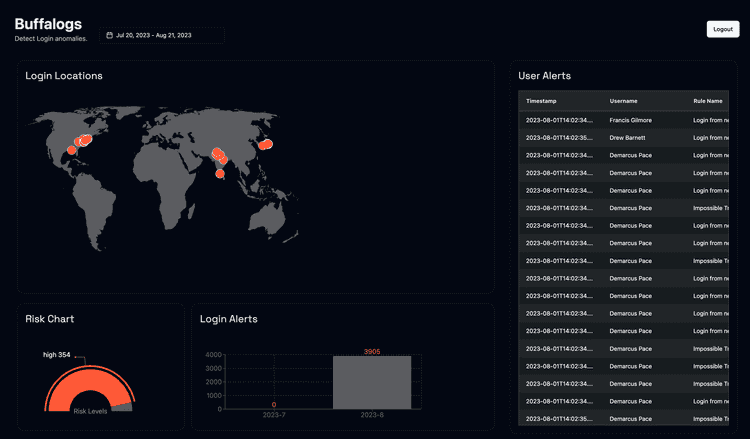

2. How can BuffaLogs protect you?

BuffaLogs detects anomalies in login data: it is an early-warning system that notifies the user, so that a compromised login can be spotted and stopped before the attacker can carry out malicious activities.

In particular, the system sends three types of alert:

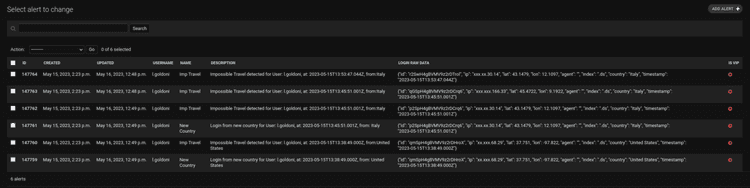

- Impossible Travel: it occurs when a user logs into the system from two locations that are so far apart that the distance could not be covered by conventional means of transport in the time between the two logins.

- Login from new device: this alert is sent if the user logs in from a device for the first time.

- Login from a new country: this alert is sent if a user logs into the system from a country where they have never authenticated before.
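The Impossible Travel idea can be illustrated with a minimal sketch. This is not the BuffaLogs implementation: the function names, the login dictionary layout and the 900 km/h speed threshold are all assumptions made for this example. It estimates the great-circle distance between two logins with the haversine formula and flags the pair when the implied speed exceeds the threshold.

```python
import math
from datetime import datetime

EARTH_RADIUS_KM = 6371.0
# Hypothetical threshold (roughly airliner cruising speed), not a BuffaLogs setting.
MAX_SPEED_KMH = 900.0


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two coordinates, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def is_impossible_travel(prev_login, new_login, max_speed_kmh=MAX_SPEED_KMH):
    """Return True if the speed implied by two logins exceeds the threshold.

    Each login is a dict with "lat", "lon" and "timestamp" (a datetime);
    this layout is an assumption of the sketch.
    """
    distance = haversine_km(prev_login["lat"], prev_login["lon"],
                            new_login["lat"], new_login["lon"])
    elapsed = new_login["timestamp"] - prev_login["timestamp"]
    hours = abs(elapsed.total_seconds()) / 3600
    if hours == 0:
        # Simultaneous logins from different places cannot be the same person.
        return distance > 0
    return distance / hours > max_speed_kmh


rome = {"lat": 41.9028, "lon": 12.4964,
        "timestamp": datetime(2023, 5, 25, 8, 30)}
new_york = {"lat": 40.7128, "lon": -74.0060,
            "timestamp": datetime(2023, 5, 25, 10, 30)}
print(is_impossible_travel(rome, new_york))
```

With these sample values the two logins are two hours apart, so the check reports an impossible travel; the same pair a full day apart would not be flagged.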

Based on the triggered alerts, a risk level (no risk, low, medium or high) is assigned to each user of the company, giving an immediate overview of the accounts most at risk.

In addition, some properties can be configured to make the detection more accurate:

- the allowed countries: a list of the countries the users visit the most;

- the VIP users: a list of the company's accounts with privileged positions;

- the ignored IPs: a list of the networks or single IPs to ignore;

- the ignored users: a list of the users to discard.
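As an illustration of how ignore lists of this kind can be applied, here is a minimal sketch using Python's standard `ipaddress` module. The list contents, the variable names and the `is_ignored` helper are hypothetical and are not taken from the BuffaLogs code base.

```python
import ipaddress

# Hypothetical configuration values, for illustration only.
IGNORED_IPS = ["10.0.0.0/8", "203.0.113.7"]
IGNORED_USERS = ["backup-service"]


def is_ignored(username, source_ip,
               ignored_users=IGNORED_USERS, ignored_ips=IGNORED_IPS):
    """Return True if a login should be skipped by the detection."""
    if username in ignored_users:
        return True
    address = ipaddress.ip_address(source_ip)
    # A single IP such as "203.0.113.7" is parsed as a /32 network,
    # so networks and single addresses are handled the same way.
    return any(address in ipaddress.ip_network(entry, strict=False)
               for entry in ignored_ips)


print(is_ignored("alice", "10.1.2.3"))
print(is_ignored("alice", "8.8.8.8"))
```

The first call matches the `10.0.0.0/8` network and is skipped, while the second login would go through the detection.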

3. How to install and configure BuffaLogs

BuffaLogs is a Docker containerized application, so it runs in isolated runtime environments that encapsulate all its dependencies, libraries and configuration files. For this reason, no software download or installation is required except for Docker and the Compose plugin, which on Ubuntu can be installed, for example, with sudo apt-get install docker.io docker-compose.

Once Docker is installed, you can download the application directly from Docker Hub with the sudo docker pull certego/buffalogs:<release_tag> command.

After that, there are two ways of running BuffaLogs, depending on your system configuration:

- if you already have an Elasticsearch cluster:

  - set the address of the host in the CERTEGO_ELASTICSEARCH variable in the buffalogs.env file

  - launch docker-compose up -d to run the containers

- if you have no host with Elasticsearch installed, you can run it directly alongside BuffaLogs:

  - run docker-compose -f docker-compose.yml -f docker-compose.elastic.yml up -d to start all the containers, including Elasticsearch and Kibana

  - Elasticsearch and Kibana are now running on the same host as BuffaLogs.

From now on, the IP of the host where BuffaLogs is running will be referred to as BUFFALOGS_IP.

Now you can run the detection on any type of log, as long as it is compliant with the Elastic Common Schema (ECS). In particular, the fields used by BuffaLogs are:

    {
      "_index": "<elastic_index>",
      "_id": "<log_id>",
      "@timestamp": "<log_timestamp>",
      "user": {
        "name": "<user_name>"
      },
      "source": {
        "geo": {
          "country_name": "<country_origin_log>",
          "location": {
            "lat": "<log_latitude>",
            "lon": "<log_longitude>"
          }
        },
        "ip": "<log_source_ip>"
      },
      "user_agent": {
        "original": "<log_device_user_agent>"
      },
      "event": {
        "type": "start",
        "category": "authentication",
        "outcome": "success"
      }
    }
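Before shipping logs, it can be useful to check that a document carries the fields listed above. The following is a minimal sketch of such a check; the helper names are hypothetical, and treating every listed field as mandatory is an assumption of this example rather than documented BuffaLogs behaviour.

```python
# Dotted paths of the ECS fields shown in the document above.
REQUIRED_FIELDS = [
    "@timestamp",
    "user.name",
    "source.ip",
    "source.geo.country_name",
    "source.geo.location.lat",
    "source.geo.location.lon",
    "user_agent.original",
    "event.type",
    "event.category",
    "event.outcome",
]


def get_nested(document, dotted_path):
    """Follow a dotted path through nested dicts; return None if absent."""
    current = document
    for key in dotted_path.split("."):
        if not isinstance(current, dict) or key not in current:
            return None
        current = current[key]
    return current


def missing_fields(document):
    """Return the dotted paths of the listed fields the document lacks."""
    return [path for path in REQUIRED_FIELDS
            if get_nested(document, path) is None]


sample = {
    "@timestamp": "2023-05-25T08:30:00Z",
    "user": {"name": "alice"},
    "source": {
        "ip": "203.0.113.7",
        "geo": {"country_name": "Italy",
                "location": {"lat": 41.9, "lon": 12.5}},
    },
    "event": {"type": "start", "category": "authentication",
              "outcome": "success"},
}
print(missing_fields(sample))
```

The sample document deliberately omits the user agent, so the check reports `user_agent.original` as the only missing field.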

Below you can find an example that helps you configure the system for monitoring SSH logins.

Monitor SSH logins

To monitor SSH logins, first install Filebeat on every host from which you want to collect logs. Then, to inspect the syslog and authorization logs, set their paths in the /etc/filebeat/modules.d/system.yml file:

    - module: system
      syslog:
        enabled: true
        var.paths: ["/var/log/syslog*"]
      auth:
        enabled: true
        var.paths: ["/var/log/auth.log*"]

In order to send these events directly to Elasticsearch, set the authentication credentials in the /etc/filebeat/filebeat.yml file:

    output.elasticsearch:
      hosts: ["https://localhost:9200"]
      username: "elastic"
      password: "********"
      ssl:
        enabled: true
        verification_mode: "none"

Finally, start the Filebeat service with sudo systemctl start filebeat.service, and you will see the login logs in the filebeat-* index in Elasticsearch. BuffaLogs detection alerts and information are available on the GUI at BUFFALOGS_IP:8000 or in the Django admin at BUFFALOGS_IP:8000/admin/.

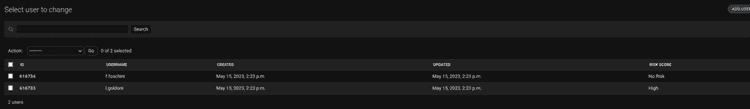

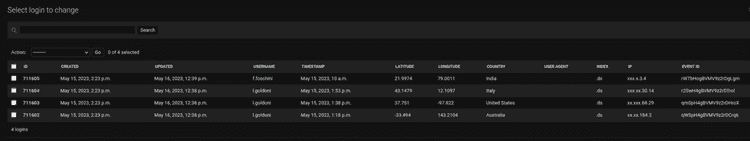

In particular, the results obtained are divided into different tables:

- User: the list of all the accounts, each with its calculated risk level;

- Login: the logins, unique per country and user-agent;

- Alert: the triggered alerts, each with the related login information.
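To make the relationship between the Login and Alert tables more concrete, here is a minimal in-memory sketch. It is not how BuffaLogs stores its data: the `record_login` helper, the alert labels and the choice to raise no alert on a user's very first login are all assumptions of this example. Logins are kept unique per country and user-agent, and a login with an unseen country or user-agent produces the corresponding alert.

```python
from collections import defaultdict


def record_login(known_logins, username, country, user_agent):
    """Store a login (unique per country and user-agent) and return alerts.

    known_logins maps each username to a set of (country, user_agent)
    pairs; this structure is hypothetical.
    """
    seen = known_logins[username]
    alerts = []
    # Assumption: a user's very first login has no history to compare with.
    if seen:
        if country not in {c for c, _ in seen}:
            alerts.append("Login from new country")
        if user_agent not in {ua for _, ua in seen}:
            alerts.append("Login from new device")
    seen.add((country, user_agent))
    return alerts


logins = defaultdict(set)
print(record_login(logins, "alice", "Italy", "Mozilla/5.0 (X11; Linux)"))
print(record_login(logins, "alice", "France", "Mozilla/5.0 (X11; Linux)"))
print(record_login(logins, "alice", "France", "curl/8.0"))
```

Because the pairs are stored in a set, repeating an already known country and user-agent combination adds no new row and raises no alert.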

BuffaLogs updates its data once every 30 minutes, so you may have to wait a while before any data appears. Alternatively, if you want to launch the detection manually, you can run one of the available management commands, depending on your purpose:

- to start the detection for the last 30 minutes, run python ./manage.py impossible_travel

- to execute the detection for a given time range, use python ./manage.py impossible_travel <start_date> <end_date>, for example: python ./manage.py impossible_travel 2023-05-25 8:30:00 2023-05-25 14:30:00

4. Full featured log-in analysis in PanOptikon®

The knowledge and technology used to build BuffaLogs are already integrated into Certego PanOptikon®, our proprietary Security Orchestration, Automation, and Response (SOAR) platform, which enables real-time monitoring, incident analysis, and response activities, as well as direct interaction between the client's IT team and Certego's Detection & Response team. This gives Certego full control over its detection mechanism, which can be customized to the needs of your organization. Leveraging the information provided by our sensors and our Threat Intelligence platform, we have tailored the BuffaLogs detection around our customers' data: this has allowed us to reduce false positives and improve detection compared to other solutions.

5. Would you like to collaborate with us?

At Certego, we strongly believe in the developer community. In the past, we have already released several open-source solutions, such as IntelOwl, Certego's Open Source Intelligence (OSINT) solution. It has long been among the top 5 most famous open-source Threat Intelligence projects on the GitHub platform, with over 2800 reviews. For this reason, the basic detection logic of BuffaLogs has been published under an open-source license. In addition, BuffaLogs took part in Google Summer of Code 2023, and one of the students is improving the graphical interface of the application, adapting it to the Certego UI. To contribute or share ideas, please visit the BuffaLogs GitHub page.